- Home

- Services

- About

- News

- Contact

- Mandrake hotels london

- Www flightcheck

- Sononym for term

- Guitar hero metallica xbox 360 bundle

- Mp3 rhino free download

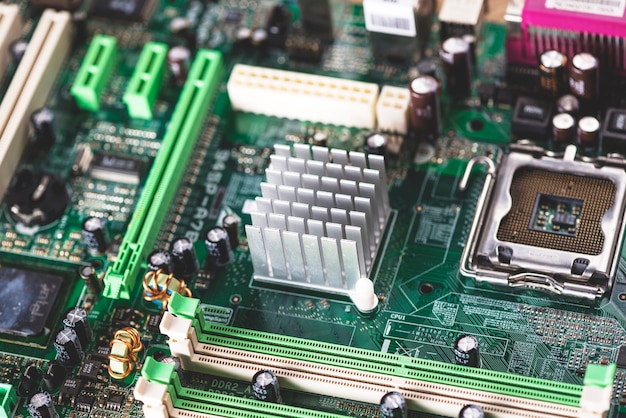

- Softraid overhead cpu

- Mio console surround

- Mozilla thunderbird vs outlook

- Bill of sale

- Watch a perfect day online

- Longer math vocabulary teacher videos

- Mortimer beckett time paradox game walkthrough

- Exped deepsleep mat 7-5 sleeping pad

- Extended shadowrun character sheet

I read here and there that a small stripe size is bad for software (and maybe hardware) RAID 5 and 6 in Linux. The rare benchmarks I saw fully agree with that.

But the explanation everybody gives is that it induces more head movements. I just don't understand how a small stripe size leads to more head movements. Let's say we have a RAID 6 setup with 4 local SAS drives.

Case 1: we write a large amount of sequential data. The program asks the kernel to write the data, then the kernel divides it to match the stripe size and computes each chunk (data and/or parity) to be written to each disk. The kernel is able to write to the 4 disks at the same time (with the proper disk controller). If the written data is not fully aligned with the stripes, the kernel only has to read the first and last stripes before computing the resulting data. All other stripes are simply overwritten without any care for the previous data. Because this computation is done much faster than the disks' throughput, each chunk is just written next to the previous one on each disk without pause. So this is basically a sequential write on 4 disks. How could a small stripe size slow this down?

Case 2: we write 1,000,000 x 1 kB of data at random places. 1 kB is smaller than the stripe size (a common stripe size is currently 512 kB). The program asks the kernel to write some data, then some other data, then some more, etc. For each write, the kernel has to read the current data on disk, compute the new content, and write it back to the disk. Then the heads move elsewhere and the operation is repeated 999,999 more times. The smaller the stripe size, the faster the data is read, computed and written. Ideally a stripe size of 4 kB should be the best with modern disks (if correctly aligned). So once again, how could a small stripe size slow this down?

When you look into the code, you see the md driver is not fully optimized: when multiple contiguous requests are made, the md driver doesn't merge them into a bigger one. This leads to massive overhead in some common situations.

Big reads or writes are optimized: they are cut down into several requests equal to the stripe size, and treated optimally. If the read or write spans 2 stripes, the md driver does the job correctly: everything is handled in one operation.

With small reads there is no problem because the data is in the kernel cache after the first read. So several contiguous reads only generate a small overhead for CPU and memory, compared to the slow disk bandwidth. For example, I read 1 GB of data 100 bytes at a time: the kernel will first transform it into a 512 kB read because this is the minimum I/O size (if the stripe size is 512 kB). So the next 100 bytes will already be in the kernel cache. This is exactly the same as reading from a non-RAID partition.

With writes smaller than the stripe size, the md driver first reads the full stripe into memory, then overwrites it in memory with the new data, then computes the result if parity is used (mostly RAID 5 and 6), then writes it to the disks. For example, I write 1 GB of data 100 bytes at a time: the kernel will first read the 512 kB stripe, overwrite the required parts in memory, compute the result if parity is involved, then write it to the disk. When writing the next 100 bytes, only the "read the 512 kB stripe" step is avoided because the data is in the kernel cache. So we have a small overhead for overwriting in memory and computing parity, but a huge overhead because the data is written again to the same stripe.

I didn't dig deep enough to understand why those repeated writes are not correctly cached, with the data flushed to disk only after several seconds (so only once per stripe). If they were cached, the overhead would only be some CPU and memory, but my own benchmarks show the CPU remains under 10%, and the I/O is the bottleneck. If the writes were optimized, then the minimum stripe size would always be the best: RAID 6 with 4 disks with 4 kB sectors would lead to 8 kB stripes, and it would be the best for read and write throughput for every possible load.

As with all things, there's a happy medium. However, it basically boils down to I/O latency and concurrent data transfer. I would suggest having a look at RAID 2 and RAID 3, both types that are rarely used, to get an understanding of the nature of the problem.
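The small-write behaviour described in this thread can be put into rough numbers. The sketch below is only a toy model of that description (every write smaller than the stripe causes the whole stripe to be written again), not a measurement of the md driver. The function names `min_stripe_bytes` and `bytes_flushed` are made up for illustration, and parity chunks are ignored.

```python
def min_stripe_bytes(n_disks: int, n_parity: int, sector_bytes: int) -> int:
    """Smallest possible stripe: one sector on each data disk."""
    data_disks = n_disks - n_parity
    if data_disks < 1:
        raise ValueError("need at least one data disk")
    return data_disks * sector_bytes


def bytes_flushed(total_bytes: int, write_bytes: int, stripe_bytes: int) -> int:
    """Bytes sent to disk under the assumed model.

    Assumption (taken from the thread, not verified against the kernel):
    a write smaller than the stripe rewrites the full stripe every time.
    """
    n_writes = -(-total_bytes // write_bytes)  # ceiling division
    per_write = max(write_bytes, stripe_bytes)
    return n_writes * per_write


if __name__ == "__main__":
    total = 10**9  # 1 GB written 100 bytes at a time, as in the example
    small = min_stripe_bytes(n_disks=4, n_parity=2, sector_bytes=4096)
    for stripe in (512 * 1024, small):
        flushed = bytes_flushed(total, 100, stripe)
        print(f"stripe {stripe:>6} B: {flushed / total:.1f}x the payload written")
```

Under this model the cost of each small write scales linearly with the stripe size, which is why the thread argues that the smallest stripe would win if repeated writes to the same stripe were merged before being flushed.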
